Fever and Meds Tracker

Overview

When my son got sick recently, it became clear that our family needed a simple way to collectively enter and track his temperature readings, medication times, and doses of medicines like acetaminophen (Tylenol), ibuprofen, and cough syrup. I wanted to build, test, and “ship” a minimal app that would allow easy submission and checking of these pieces of information so everybody could have a shared resource to keep up-to-date. For data entry, I imagined a family member quickly dictating the subject line of an email with information phrased however they wanted and pressing send. The goal was to create something useful in under a day, ideally under 4 hours, which pushed me to focus on efficiency and prioritize the most essential features to ensure we could start using it immediately.

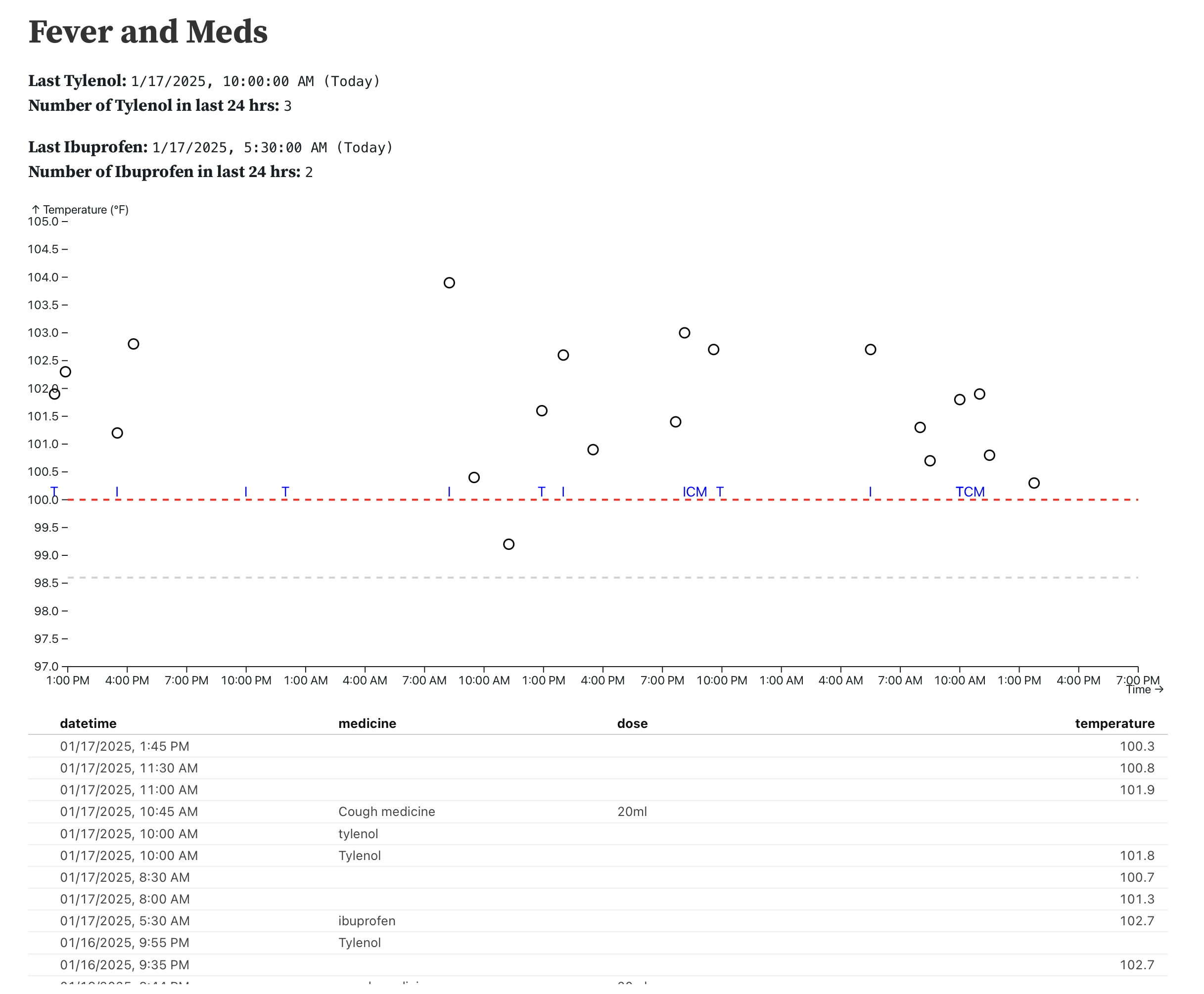

Here is the dashboard portion (a web page) of what I ended up with:

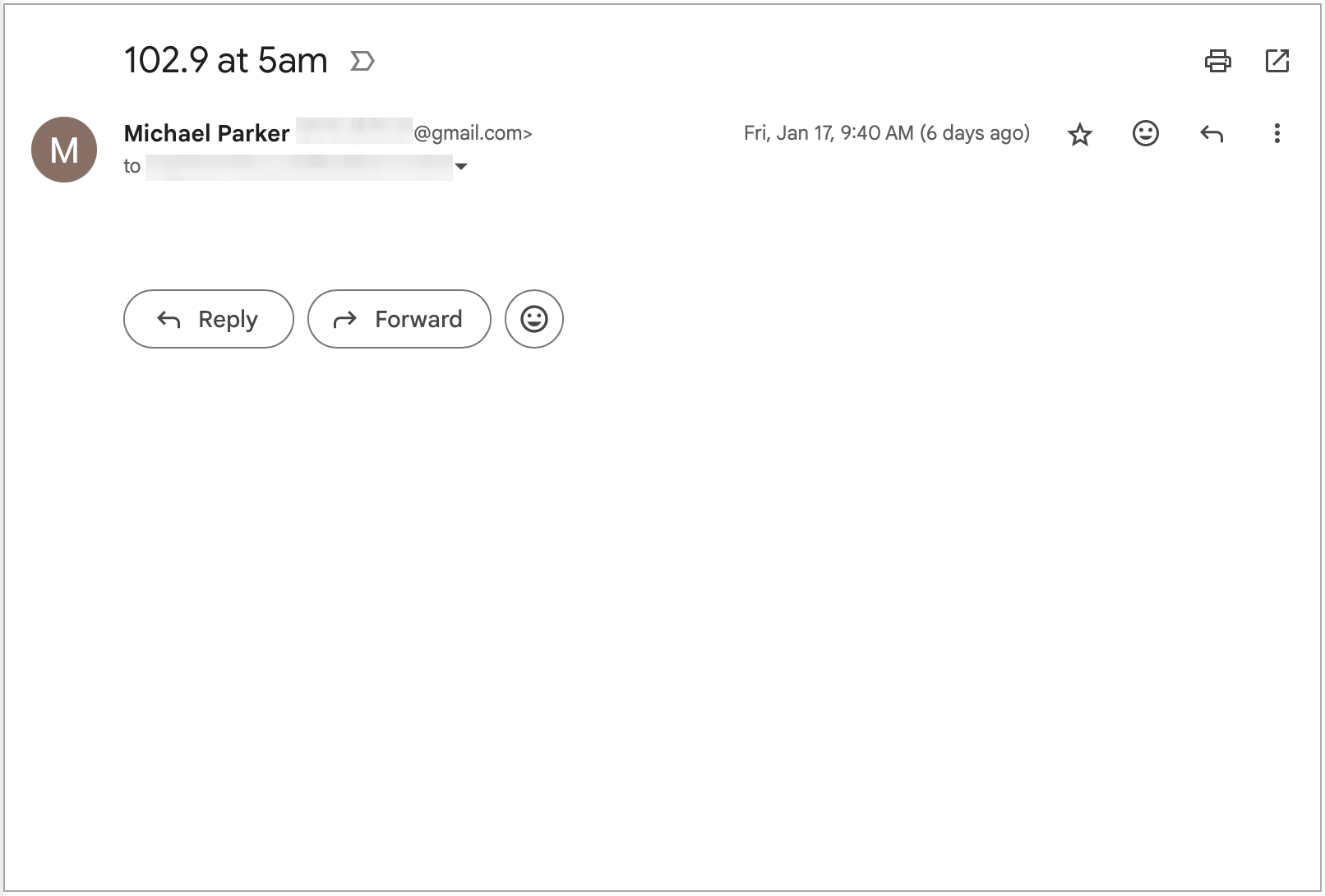

…and here are a couple of examples of how input occurs by sending an email to a custom bot with an unstructured subject line that can include one or more of time, medicine, dose, temperature:

Build

The basic design was that someone could send an email with a subject line like “101 and 1 of tylenol” or “cough medicine at 5am”, containing some combination of medicine, dose, time, and temperature, to a special email address. When this address receives an email, it inspects the subject line and extracts structured data. That data is then stored in a SQLite database along with the specified time (or the time of email receipt), in UTC. Family members can view the dashboard web page to see this info in real time. The dashboard pulls the data from the database’s URL endpoint and displays it, synthesizing useful information like the time of the last dose for meds like Tylenol, which should not exceed some number of doses in 24 hours but can be alternated with other classes of medicine like ibuprofen.
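The "time of last dose" synthesis on the dashboard can be sketched as a pure function over the stored rows. This is a minimal sketch, not the actual dashboard code: the `TrackerEntry` shape mirrors the four fields described above, while `summarizeMedicine`, the case-insensitive name match, and the `windowHours` parameter are assumptions made for illustration. It only counts doses; it does not encode any dosing limits.

```typescript
// Hypothetical row shape, mirroring the fields stored in the SQLite table.
interface TrackerEntry {
  datetime: string; // UTC ISO 8601, e.g. "2025-01-17T14:30:00Z"
  medicine: string; // empty string when the entry is only a temperature
  dose: string;
  temperature: string;
}

interface MedicineSummary {
  lastDoseUtc: string | null; // most recent dose at or before `now`
  dosesInWindow: number; // doses inside the trailing window
}

// Summarize one medicine: when it was last given and how many doses
// fall inside the trailing window (24 hours by default).
function summarizeMedicine(
  entries: TrackerEntry[],
  medicine: string,
  now: Date,
  windowHours: number = 24,
): MedicineSummary {
  const nowMs = now.getTime();
  const windowStartMs = nowMs - windowHours * 60 * 60 * 1000;
  let lastMs: number | null = null;
  let dosesInWindow = 0;

  for (const entry of entries) {
    if (entry.medicine.toLowerCase() !== medicine.toLowerCase()) continue;
    const ms = Date.parse(entry.datetime);
    if (isNaN(ms) || ms > nowMs) continue; // skip unparseable or future rows
    if (lastMs === null || ms > lastMs) lastMs = ms;
    if (ms >= windowStartMs) dosesInWindow += 1;
  }

  return {
    lastDoseUtc: lastMs === null ? null : new Date(lastMs).toISOString(),
    dosesInWindow: dosesInWindow,
  };
}
```

Keeping this as a pure function of the rows and the current time means the same logic can run in the browser or on the server, and it can be checked against hand-written rows.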

I was aware of val.town, a serverless platform for hosting JavaScript functions, having used it once before to build a mini-app that automated weight tracking. I remembered it as requiring very little setup time, so I decided to use it to get things up and running quickly. I used Townie, their AI assistant, to create a first draft for a “val” (what the serverless functions on that platform are called) with the right imports for receiving an email. I then fleshed this out for the following workflow in the function:

- Receive an email and get the subject line information.

- Call OpenAI’s gpt-4o-mini to extract the right structured fields (like temperature) from the email subject line, resulting in a JSON object.

- Store the JSON object info in a SQLite database (that I separately set up in val.town with a table with corresponding fields)

- Send a confirmation email to my personal email address showing the JSON object that was created, along with the subject line that was initially sent. Not strictly necessary, but good for initial validation/confirmation.
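The four steps above can be sketched as a single handler. This is an illustrative outline under stated assumptions, not the actual val: `IncomingEmail`, `FeverEntry`, `PipelineDeps`, and `handleEmail` are hypothetical names, and the LLM call, the SQLite insert, and the confirmation email are passed in as functions rather than written against val.town's or OpenAI's real APIs.

```typescript
// Hypothetical shapes; a real val.town email object carries more fields.
interface IncomingEmail {
  subject: string;
  receivedAt: Date;
}

interface FeverEntry {
  datetime: string; // UTC ISO 8601
  medicine: string;
  dose: string;
  temperature: string;
}

// The three external steps are injected so the flow can be read (and tested)
// without an LLM, a database, or a mail service.
interface PipelineDeps {
  extract: (subject: string, now: Date) => Promise<FeverEntry>; // LLM call
  store: (entry: FeverEntry) => Promise<void>; // SQLite insert
  notify: (subject: string, body: string) => Promise<void>; // confirmation email
}

async function handleEmail(
  email: IncomingEmail,
  deps: PipelineDeps,
): Promise<FeverEntry> {
  // 1. Read the subject line.
  const subject = email.subject.trim();
  // 2. Ask the model for structured fields, passing the receipt time so it
  //    can fall back to "now" when the subject mentions no time.
  const entry = await deps.extract(subject, email.receivedAt);
  // 3. Persist the structured entry.
  await deps.store(entry);
  // 4. Echo the original subject and the extracted JSON back for validation.
  await deps.notify("AI Analysis of: " + subject, JSON.stringify(entry, null, 2));
  return entry;
}
```

Injecting the three dependencies is a design choice made for this sketch; it keeps the ordering of the steps visible and lets the handler be exercised with stubs before any real service is wired in.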

Time Zones

I made the mistake of storing local time without a time zone specification in my first pass. I then tried having Claude help me write SQL for SQLite to update the table, converting from local time to UTC. Claude got very confused, repeatedly coming up with output that was off by many hours, and cheerfully explaining what it thought the misstep was each time. After a few attempts, I had gpt-4o try, and it one-shotted a working version. It’s not always easy to tell ahead of time where a given model might struggle on a particular problem.
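The conversion itself is small once the offset is fixed. Here is a minimal sketch, assuming every stored value is a naive timestamp like `2025-01-16T21:35:00` in Eastern Standard Time (UTC-5, with daylight saving ignored). `localEasternToUtc` is a hypothetical helper written for illustration, not the SQL that was actually run against the table.

```typescript
// Assumption: every stored value is a naive local timestamp in Eastern
// Standard Time (UTC-5). Daylight saving time is deliberately ignored.
const EASTERN_OFFSET_HOURS = -5;

function localEasternToUtc(naiveLocal: string): string {
  // Parse the wall-clock fields explicitly so the result never depends on
  // the time zone of the machine running this code.
  const match = /^(\d{4})-(\d{2})-(\d{2})[T ](\d{2}):(\d{2}):(\d{2})$/.exec(naiveLocal);
  if (match === null) {
    throw new Error("Expected YYYY-MM-DDTHH:mm:ss, got: " + naiveLocal);
  }
  const year = Number(match[1]);
  const month = Number(match[2]);
  const day = Number(match[3]);
  const hour = Number(match[4]);
  const minute = Number(match[5]);
  const second = Number(match[6]);

  // Treat the fields as if they were UTC, then remove the zone offset:
  // UTC = local - offset, so 21:35 at UTC-5 becomes 02:35 the next day.
  const asIfUtcMs = Date.UTC(year, month - 1, day, hour, minute, second);
  const utcMs = asIfUtcMs - EASTERN_OFFSET_HOURS * 60 * 60 * 1000;

  // Drop the milliseconds to get the YYYY-MM-DDTHH:mm:ssZ shape.
  return new Date(utcMs).toISOString().replace(".000Z", "Z");
}
```

For example, `localEasternToUtc("2025-01-16T21:35:00")` returns `"2025-01-17T02:35:00Z"`. The date rolls over, which is exactly the kind of detail that is easy to get wrong by several hours.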

LLM Prompt Iteration

The other part that took a little time was getting the LLM prompt working well. I thought the structured extraction task wasn’t too complicated, so I used gpt-4o-mini (plus it’s free to call in val.town below a certain rate!), with structured output specified by a Zod object. A very simple one-line prompt worked reasonably well at first, but the model kept making mistakes with the time zone portion (sometimes giving local time and sometimes UTC time). I iterated with the OpenAI meta-prompt to quickly arrive at a more complete system prompt with examples (it assumes US Eastern as the local time zone for this prototype):

Extract the fever entry information. Current time is ${utcString} (${localTime}).

Any times mentioned are in Eastern Time (UTC-5).

Fields:

- datetime: Convert time to UTC ISO8601 format (YYYY-MM-DD'T'HH:mm:ssZ)

- medicine: medication name or empty string if not present

- dose: dosage or empty string if not present

- temperature: temperature reading or empty string if not present

If only time is given, use today's date. If no time is given, use current time.

Examples:

Input: "Tylenol 9pm"

{

"datetime": "2025-01-18T02:00:00Z", // 9pm ET = 2am UTC next day

"medicine": "Tylenol",

"dose": "",

"temperature": ""

}

Input: "Fever 101.2 at 3pm Jan 16"

{

"datetime": "2025-01-16T20:00:00Z", // 3pm ET = 8pm UTC

"medicine": "",

"dose": "",

"temperature": "101.2"

}

Input: "2 tsp motrin" // no time given, use current time

{

"datetime": "2025-01-17T14:30:00Z",

"medicine": "motrin",

"dose": "2 tsp",

"temperature": ""

}
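The `${utcString}` and `${localTime}` placeholders in the prompt above have to be filled in on every request. A minimal sketch of how that might look follows, assuming a fixed UTC-5 offset as the prompt does. `toUtcString`, `toLocalString`, and `buildPromptHeader` are hypothetical helpers, and the exact local-time format is a guess rather than what the val actually uses.

```typescript
// Assumption: local time is always US Eastern Standard Time (UTC-5),
// matching the prompt; daylight saving time is not handled.
const LOCAL_OFFSET_HOURS = -5;

function pad2(n: number): string {
  return n < 10 ? "0" + n : String(n);
}

// Format a Date as YYYY-MM-DDTHH:mm:ssZ (UTC, no milliseconds).
function toUtcString(d: Date): string {
  return d.toISOString().slice(0, 19) + "Z";
}

// Format the same instant as Eastern wall-clock time, e.g. "2025-01-17 09:30 ET".
function toLocalString(d: Date): string {
  // Shift the instant by the offset, then read it back with the UTC getters
  // so the host machine's own time zone never leaks in.
  const shifted = new Date(d.getTime() + LOCAL_OFFSET_HOURS * 60 * 60 * 1000);
  const datePart =
    shifted.getUTCFullYear() +
    "-" + pad2(shifted.getUTCMonth() + 1) +
    "-" + pad2(shifted.getUTCDate());
  const timePart = pad2(shifted.getUTCHours()) + ":" + pad2(shifted.getUTCMinutes());
  return datePart + " " + timePart + " ET";
}

// Build the first two lines of the system prompt for a given "now".
function buildPromptHeader(now: Date): string {
  const utcString = toUtcString(now);
  const localTime = toLocalString(now);
  return (
    `Extract the fever entry information. Current time is ${utcString} (${localTime}).\n` +
    "Any times mentioned are in Eastern Time (UTC-5)."
  );
}
```

Giving the model both renderings of the current time lets it anchor "today" in local terms while still emitting UTC, which is the part the one-line prompt kept getting wrong.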

Evals

Given the small scale of this project, I didn’t think evals were strictly necessary (beyond initial testing to make sure we had accurate information, of course!), but I decided to see if I could creatively leverage Gemini’s video understanding. If this were being turned into a real app, I would add logging/LLM observability to track extraction quality rather than doing this extraction via video after the fact. This was more for fun, to see Gemini’s capabilities. I filtered my email to just the confirmation emails and took a shaky, hand-held iPhone video of my screen while I scrolled through them. Here’s a little bit of what it looked like:

I then gave the video to Gemini, along with specific instructions about what I was doing, what I wanted extracted, and how I wanted it turned into a CSV. I also gave it the task of comparing the extracted JSON (what had been extracted by gpt-4o-mini, shown in the body of the email confirmations in the video) to the original subject line (what is shown after “AI Analysis of:” in the video above) plus the time of confirmation email receipt (shown on the right side of the email). It was to add true or false to a column in the output CSV, depending on whether the JSON values were extracted correctly.

Here is my prompt if you’re interested. I could possibly have been more succinct but wanted to make sure the model had the context it needed. This is in a chat interface, so the prompt invites a bit of back-and-forth here if necessary.

I have built an app to track fever recordings and medications given to my child while he's sick. To do this, I send an email to my automation email address, including some combination of temp, time, dose, medicine in there depending on what was done (temperature taken, medicine given, etc.). This goes in the subject line. When this is received, I have an LLM extract a JSON object version of the data, with fields datetime, medicine, dose, and temperature. The user, if including a time, puts the time in local time (US Eastern, which is utc-5). The model, based on the prompt, converts this to utc to store in a sqlite db. Then an email is sent to me recording what was sent (in the subject line as "AI Analysis of: 11:30am, 100.8f" for example, with what comes after the colon as what was sent. Then in the body of the email, the JSON object that the model extracted is shown. I took a video of me paging through all the emails received so far. I'd like to get the data out (the sent entry and the json object) so I can evaluate how many times the model was correct. Can you extract this information into csv format if I give you the video? Ideally you would also add another column that says whether the output was correct or not. Note that the time that this confirmation email is sent to me may be up to 3 or 4 minutes after the time I sent the information in, so you would need a bit of tolerance in calling something correct. Sound good?

Gemini asked me a couple of clarifying questions and then did a great job of extracting the info into a CSV with rows like this (partial version):

| Original Subject | Confirmation Time | JSON Output | Correct | Notes |
|---|---|---|---|---|
| 100.8 on 1/15 8am | Fri, Jan 17, 9:42 AM (1 day ago) | {"datetime":"2025-01-15T13:00:00Z","medicine":"","dose":"","temperature":"100.8"} | TRUE | promptv_02 |
| 1 tylenol | Fri, Jan 17, 9:41 AM (1 day ago) | {"datetime":"2025-01-17T14:41:22Z","medicine":"tylenol","dose":"1","temperature":""} | TRUE | promptv_02 |
| 102.9 at 5am | Fri, Jan 17, 9:40 AM (1 day ago) | {"datetime":"2025-01-17T10:00:00Z","medicine":"","dose":"","temperature":"102.9"} | TRUE | promptv_02 |
| temp 102.7 at 9:35pm on 2025-01-16 | Thu, Jan 16, 9:36 PM (2 days ago) | {"datetime":"2025-01-16T21:35:00","medicine":"","dose":"","temperature":"102.7"} | FALSE | promptv_01 |
| temp 102.7 | Thu, Jan 16, 9:33 PM (2 days ago) | {"datetime":"2025-01-17T02:33:20","medicine":"","dose":"","temperature":"102.7"} | FALSE | promptv_01 |
| 20ml of cough medicine at 8:44pm on 1/16/2025 | Thu, Jan 16, 8:46 PM (2 days ago) | {"datetime":"2025-01-16T20:44:00","medicine":"cough medicine","dose":"20ml","temperature":""} | FALSE | promptv_01 |

I added the Notes column to track prompt versions, showing when I switched from the simple initial prompt to the longer one with examples. With the info that Gemini extracted, it was easy to check how much the prompt iteration improved things.
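The correctness check I handed to Gemini could also be written down in code once the rows are in hand. The sketch below makes several assumptions: `EvalRow`, `isDatetimeCorrect`, and `accuracyByVersion` are hypothetical names, the expected UTC value for each row is supplied by hand, and treating a timestamp without an explicit `Z` as incorrect is a rule chosen for this sketch rather than a documented reason for the FALSE rows above.

```typescript
// Hypothetical shape for one evaluated row.
interface EvalRow {
  expectedUtc: string; // what a correct extraction should contain
  extractedUtc: string; // the datetime field from the model's JSON
  promptVersion: string; // e.g. "promptv_01"
}

// Sketch rule: a datetime is correct only if it carries an explicit UTC
// marker and lands within the tolerance of the expected instant.
function isDatetimeCorrect(
  expectedUtc: string,
  extractedUtc: string,
  toleranceMinutes: number,
): boolean {
  if (extractedUtc.charAt(extractedUtc.length - 1) !== "Z") return false;
  const expectedMs = Date.parse(expectedUtc);
  const extractedMs = Date.parse(extractedUtc);
  if (isNaN(expectedMs) || isNaN(extractedMs)) return false;
  return Math.abs(expectedMs - extractedMs) <= toleranceMinutes * 60 * 1000;
}

// Fraction of correct rows for each prompt version.
function accuracyByVersion(
  rows: EvalRow[],
  toleranceMinutes: number,
): { [version: string]: number } {
  const correct: { [version: string]: number } = {};
  const total: { [version: string]: number } = {};
  for (const row of rows) {
    total[row.promptVersion] = (total[row.promptVersion] || 0) + 1;
    if (isDatetimeCorrect(row.expectedUtc, row.extractedUtc, toleranceMinutes)) {
      correct[row.promptVersion] = (correct[row.promptVersion] || 0) + 1;
    }
  }
  const accuracy: { [version: string]: number } = {};
  for (const version of Object.keys(total)) {
    const count = total[version] || 0;
    if (count > 0) accuracy[version] = (correct[version] || 0) / count;
  }
  return accuracy;
}
```

The tolerance parameter plays the same role as the "3 or 4 minutes" of slack mentioned in the prompt to Gemini: entries with no explicit time are stamped at receipt, so an exact match is not a fair test.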

All in all, a good experience putting this together. What lessons do I take (or re-affirm) from this experience? One is that it is very feasible to rapidly create personalized software - small apps purpose-built and optimized for your own use case. Simon Willison has written about his experience with building small utilities using AI, and Geoffrey Litt has called it malleable software. Another lesson re-affirmed is that with AI assistance becoming more ubiquitous (I used Claude, Gemini, ChatGPT, gpt-4o, gpt-4o-mini, Townie, and GitHub Copilot in this one project!), there are far fewer of those multi-day stumbling blocks that you run into in coding projects. A good experience resulting in a useful tool that I hope I have very few occasions to use in the future!